Understand positional encoding in Transformers, from sinusoidal embeddings to RoPE, and learn how models capture token order and relative position.

I’m fascinated by how we encode word position inside Transformers.

A few years ago, when people were still mostly talking about recurrent networks like LSTMs, position came “for free” from the sequence itself: hidden states were built step by step, so the model naturally carried a notion of order through time.

In pure Transformers, that sequential recurrence is gone. Attention is parallel.

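So without positional information, (unmasked) self-attention treats its input as an unordered set of tokens: permuting the tokens simply permutes the outputs in the same way, so order carries no information.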

For an LLM, sentences like:

“The dog bites the man”

“The man bites the dog”

must not mean the same thing. The model needs position to know who is doing what to whom.
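A minimal NumPy sketch makes the problem concrete. It runs a single attention head with no positional information on both sentences, using hypothetical random embeddings and weights (nothing here is trained). Swapping "dog" and "man" only swaps the corresponding output rows, so "dog" ends up with exactly the same vector whether it is biting or being bitten.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention with no positional information."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # stabilize the softmax
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v

rng = np.random.default_rng(0)
d = 8
# Hypothetical toy embeddings and projection weights (random, not learned).
vocab = {w: rng.normal(size=d) for w in ["the", "dog", "bites", "man"]}
w_q, w_k, w_v = (rng.normal(size=(d, d)) for _ in range(3))

sent_a = ["the", "dog", "bites", "the", "man"]
sent_b = ["the", "man", "bites", "the", "dog"]
out_a = self_attention(np.stack([vocab[w] for w in sent_a]), w_q, w_k, w_v)
out_b = self_attention(np.stack([vocab[w] for w in sent_b]), w_q, w_k, w_v)

# sent_b is sent_a with positions 1 and 4 swapped; the outputs are swapped the same way.
perm = [0, 4, 2, 3, 1]
print(np.allclose(out_b, out_a[perm]))  # True
```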

That is exactly where positional encoding enters.

Positional Encoding

In Attention Is All You Need, this is solved by adding a positional vector to each token embedding:

$$x_{pos} = e_{token} + p_{pos}$$

where:

$e_{token}$: token embedding

$p_{pos}$: positional encoding vector for position $pos$

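The original sinusoidal formulation is:

$$p_{pos,2i} = \sin\left(\frac{pos}{10000^{2i/d_{model}}}\right), \qquad p_{pos,2i+1} = \cos\left(\frac{pos}{10000^{2i/d_{model}}}\right)$$

where $i$ indexes pairs of dimensions and $d_{model}$ is the embedding size.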
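Here is a minimal NumPy sketch of the sinusoidal table and of adding it to the token embeddings, $x_{pos} = e_{token} + p_{pos}$. It assumes an even embedding size, and the random `token_emb` matrix is a hypothetical stand-in for learned token embeddings.

```python
import numpy as np

def sinusoidal_encoding(max_len: int, d_model: int) -> np.ndarray:
    """Return a (max_len, d_model) table whose row `pos` is p_pos (d_model must be even)."""
    pos = np.arange(max_len)[:, None]              # (max_len, 1)
    i = np.arange(d_model // 2)[None, :]           # (1, d_model / 2), one index per pair
    angles = pos / 10000 ** (2 * i / d_model)      # pos / 10000^(2i / d_model)
    pe = np.zeros((max_len, d_model))
    pe[:, 0::2] = np.sin(angles)                   # even dimensions 2i
    pe[:, 1::2] = np.cos(angles)                   # odd dimensions 2i + 1
    return pe

rng = np.random.default_rng(0)
seq_len, d_model = 5, 16
token_emb = rng.normal(size=(seq_len, d_model))        # hypothetical stand-in for e_token
x = token_emb + sinusoidal_encoding(seq_len, d_model)  # x_pos = e_token + p_pos
```

Each sine/cosine pair has unit length, so every row of the table has the same norm, $\sqrt{d_{model}/2}$; positions differ only in the phases of the waves.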

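Intuitively, it is tempting to think of this as “token 1 gets +1, token 2 gets +2, and so on.”

The sine and cosine waves are more subtle than that, but what really matters is that each position still gets its own fixed vector, so this is mostly an absolute encoding of position.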

And this creates a practical issue for long contexts: absolute positions can be less robust when we care about relative distance between words (how far one token is from another), especially beyond training length.

We need a way to represent position relatively.

That is where RoPE (Rotary Positional Embedding) comes in.

RoPE (Rotary Positional Embedding)

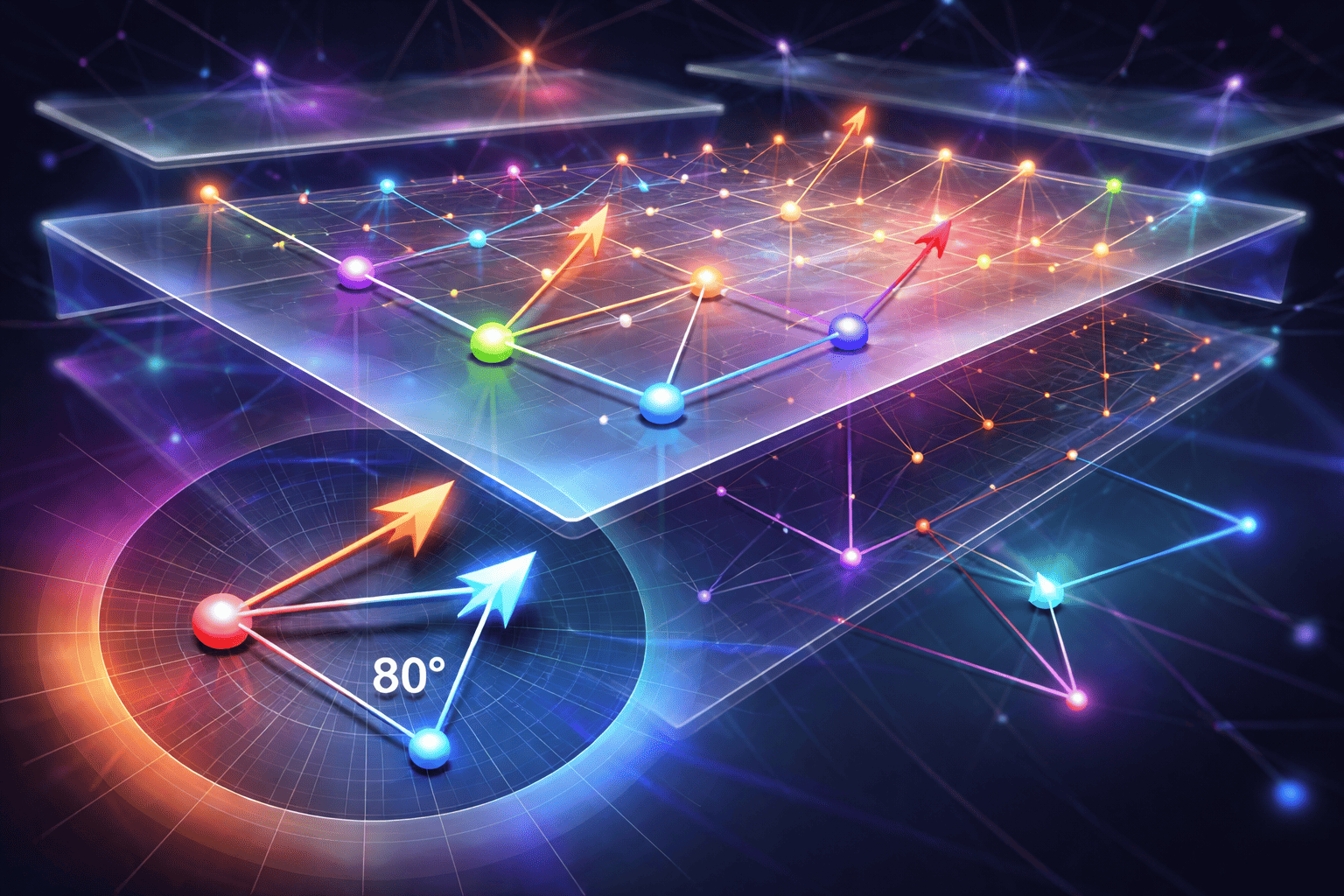

Before explaining the method, remember: tokens are mapped to vectors, and vectors are objects we can transform—add, multiply, and also rotate in vector space.

RoPE’s core idea is simple and elegant:

We rotate representations by an angle that depends on token position.

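Imagine comparing the words at positions 10 and 15, where each step in position adds a fixed angle $\theta$.

Position 10: rotated by $10\theta$

Position 15: rotated by $15\theta$

Difference: $15\theta - 10\theta = 5\theta$

Now imagine those same words appear later, at positions 100 and 105.

Position 100: rotated by $100\theta$

Position 105: rotated by $105\theta$

Difference: $105\theta - 100\theta = 5\theta$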

The absolute angles changed, but the relative angle stayed the same.
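The same idea as a toy 2D NumPy sketch: rotate a query by $m\theta$ and a key by $n\theta$, then take their dot product. Because $R(m\theta)^\top R(n\theta) = R((n-m)\theta)$, the score depends only on the offset $n - m$. The vectors and the base angle $\theta = 0.1$ are arbitrary values chosen for illustration.

```python
import numpy as np

def rotate(v: np.ndarray, angle: float) -> np.ndarray:
    """Rotate a 2D vector counter-clockwise by `angle` radians."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([c * v[0] - s * v[1], s * v[0] + c * v[1]])

theta = 0.1                  # arbitrary base angle per position step
q = np.array([1.0, 2.0])     # toy query vector
k = np.array([0.5, -1.0])    # toy key vector

def score(m: int, n: int) -> float:
    """Dot product after rotating q by m * theta and k by n * theta."""
    return float(rotate(q, m * theta) @ rotate(k, n * theta))

print(np.isclose(score(10, 15), score(100, 105)))  # True: both pairs are 5 positions apart
print(np.isclose(score(10, 15), score(10, 20)))    # False: offsets 5 and 10 differ
```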

That gives two big advantages over older approaches:

Relative distance awareness

The model cares about the angular difference (the relative offset), not only about absolute location in the sequence.

Better length extrapolation (with caveats)

Because position is encoded as continuous rotations, models often generalize better to contexts longer than those seen during training (not infinitely, but usually better than with purely learned absolute position tables).

RoPE explained mathematically

Now we go into the math.

I know many people dislike this part, but if we want to implement it properly, we need formal definitions—not only analogies.

Before formulas, let’s clarify where RoPE is applied.

RoPE is applied after creating Query and Key, and before computing their dot product.

Unlike old positional embeddings (added once at the input), RoPE is typically applied inside each attention layer.

The workflow (step by step)

Inside one attention head:

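Input

The token representation $x_m$ at position $m$ arrives from the embeddings and previous layers.

Linear projections (create Q, K, V)

$$q_m = W_q x_m, \quad k_n = W_k x_n, \quad v_n = W_v x_n$$

Apply RoPE to $q$ and $k$ (usually not to $v$):

$$\tilde{q}_m = R_{\Theta,m}\, q_m, \quad \tilde{k}_n = R_{\Theta,n}\, k_n$$

where $R_{\Theta,m}$ rotates each pair of dimensions $(2i, 2i+1)$ by the angle $m\theta_i$, with $\theta_i = 10000^{-2i/d}$ and $d$ the head dimension.

Attention score (dot product)

$$s_{mn} = \frac{\tilde{q}_m^\top \tilde{k}_n}{\sqrt{d}} = \frac{q_m^\top R_{\Theta,n-m}\, k_n}{\sqrt{d}}$$

Softmax and output

$$o_m = \sum_n \mathrm{softmax}_n(s_{mn})\, v_n$$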
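The steps above can be sketched for a single head in NumPy. This is a minimal illustration under stated assumptions, not a production implementation: no causal mask, no batching, no multi-head split, random weights, and the interleaved pairing of dimensions $(2i, 2i+1)$ with $\theta_i = 10000^{-2i/d}$. The code uses the row-vector convention `x @ W`, which is the transpose of $W x$ in the formulas.

```python
import numpy as np

def rope(x: np.ndarray, positions: np.ndarray, base: float = 10000.0) -> np.ndarray:
    """Rotate each dimension pair (2i, 2i+1) of row x[m] by positions[m] * theta_i.

    x has shape (seq_len, d) with d even, and theta_i = base ** (-2i / d).
    """
    d = x.shape[-1]
    theta = base ** (-np.arange(0, d, 2) / d)       # (d/2,), one frequency per pair
    angles = positions[:, None] * theta[None, :]    # (seq_len, d/2)
    cos, sin = np.cos(angles), np.sin(angles)
    x_even, x_odd = x[:, 0::2], x[:, 1::2]
    out = np.empty_like(x)
    out[:, 0::2] = x_even * cos - x_odd * sin       # 2D rotation, first coordinate
    out[:, 1::2] = x_even * sin + x_odd * cos       # 2D rotation, second coordinate
    return out

def attention_head_with_rope(x, w_q, w_k, w_v):
    """One attention head: no causal mask, no batching, no multi-head split."""
    positions = np.arange(x.shape[0])
    q, k, v = x @ w_q, x @ w_k, x @ w_v             # linear projections (row-vector convention)
    q, k = rope(q, positions), rope(k, positions)   # RoPE on Q and K only, not V
    scores = q @ k.T / np.sqrt(q.shape[-1])         # attention scores
    scores -= scores.max(axis=-1, keepdims=True)    # stabilize the softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # softmax over keys
    return weights @ v                              # weighted sum of values

rng = np.random.default_rng(0)
d = 8
w_q, w_k, w_v = (rng.normal(size=(d, d)) for _ in range(3))
out = attention_head_with_rope(rng.normal(size=(5, d)), w_q, w_k, w_v)

# The score between a rotated query and key depends only on the offset n - m.
q1, k1 = rng.normal(size=(1, d)), rng.normal(size=(1, d))

def rope_score(m: int, n: int) -> float:
    return (rope(q1, np.array([m])) @ rope(k1, np.array([n])).T).item()

print(np.isclose(rope_score(3, 7), rope_score(40, 44)))  # True: both offsets are 4
```

Many Llama-style implementations pair dimension $i$ with $i + d/2$ (the "rotate-half" layout) instead of $(2i, 2i+1)$; the two layouts differ only by a fixed permutation of the dimensions, so the relative-position property is the same.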

When we talk about algorithms within Transformers, I always love to get under the hood and see what makes them tick. Matrix calculations can be handled in countless ways; some are memory-efficient, others are processing-heavy, and finding the sweet spot is an art form.

In many cases, rewriting these operations as custom CUDA kernels significantly accelerates both training and inference of machine learning models.

Today, I want to highlight a specific pull request from the Unsloth repository: PR #238.

This PR focuses on a massive optimization of the RoPE algorithm, squeezing even more performance out of the hardware.

Unsloth’s PR #238 Optimization

PR #238 is a performance-critical update to the Unsloth library that surgically optimizes the RoPE (Rotary Positional Embeddings) algorithm.

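In standard deep learning frameworks, RoPE is typically computed as a chain of separate elementwise operations (slicing, multiplications, additions), each of which reads its inputs from and writes its outputs to the GPU's memory (VRAM). These operations are "memory-bound": their runtime is dominated by moving data rather than by arithmetic.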

Unsloth solves this by implementing a custom Triton kernel that fuses operations and handles memory "in-place."
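The NumPy sketch below only illustrates the "in-place" idea on the CPU. It is not Unsloth's Triton kernel, and the "rotate-half" pairing of dimension $i$ with $i + d/2$ is an assumption here (it is the layout used by many Llama-style implementations). The function applies the rotation by overwriting the input buffer instead of allocating a new output tensor, and the result is checked against an out-of-place reference.

```python
import numpy as np

def rope_rotate_half(x: np.ndarray, cos: np.ndarray, sin: np.ndarray) -> np.ndarray:
    """Out-of-place reference: allocates a new (seq_len, d) output tensor."""
    h = x.shape[-1] // 2
    x1, x2 = x[:, :h], x[:, h:]
    return np.concatenate([x1 * cos - x2 * sin, x2 * cos + x1 * sin], axis=-1)

def rope_rotate_half_inplace(x: np.ndarray, cos: np.ndarray, sin: np.ndarray) -> None:
    """Same rotation, but the result is written back into x's own buffer.

    Assumes the rotate-half layout (dimension i paired with i + d/2). NumPy still
    creates small temporaries for the products; a fused GPU kernel could instead
    keep each (x1, x2) pair in registers and read and write x only once.
    """
    h = x.shape[-1] // 2
    x1, x2 = x[:, :h], x[:, h:]    # views into x, not copies
    new_x1 = x1 * cos - x2 * sin   # uses the original x1 and x2
    x2 *= cos                      # x2 <- x2 * cos
    x2 += x1 * sin                 # x2 <- x2 * cos + x1 * sin (x1 is still original)
    x1[...] = new_x1               # overwrite the first half last

rng = np.random.default_rng(0)
seq_len, d = 4, 8
theta = 10000.0 ** (-np.arange(0, d, 2) / d)
angles = np.arange(seq_len)[:, None] * theta[None, :]  # (seq_len, d/2)
cos, sin = np.cos(angles), np.sin(angles)

x = rng.normal(size=(seq_len, d))
expected = rope_rotate_half(x, cos, sin)
buf = x.copy()
rope_rotate_half_inplace(buf, cos, sin)
print(np.allclose(buf, expected))  # True
```

The order of the updates is the design choice that matters: the new first half is computed before either half is overwritten, and the first half is written back last, so no step reads a value that has already been rotated.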

The result is a dramatic reduction in VRAM usage and a significant boost in training speed, allowing large models to be fine-tuned on consumer-grade hardware.