MolFun

Open-source framework for fine-tuning protein structure prediction models

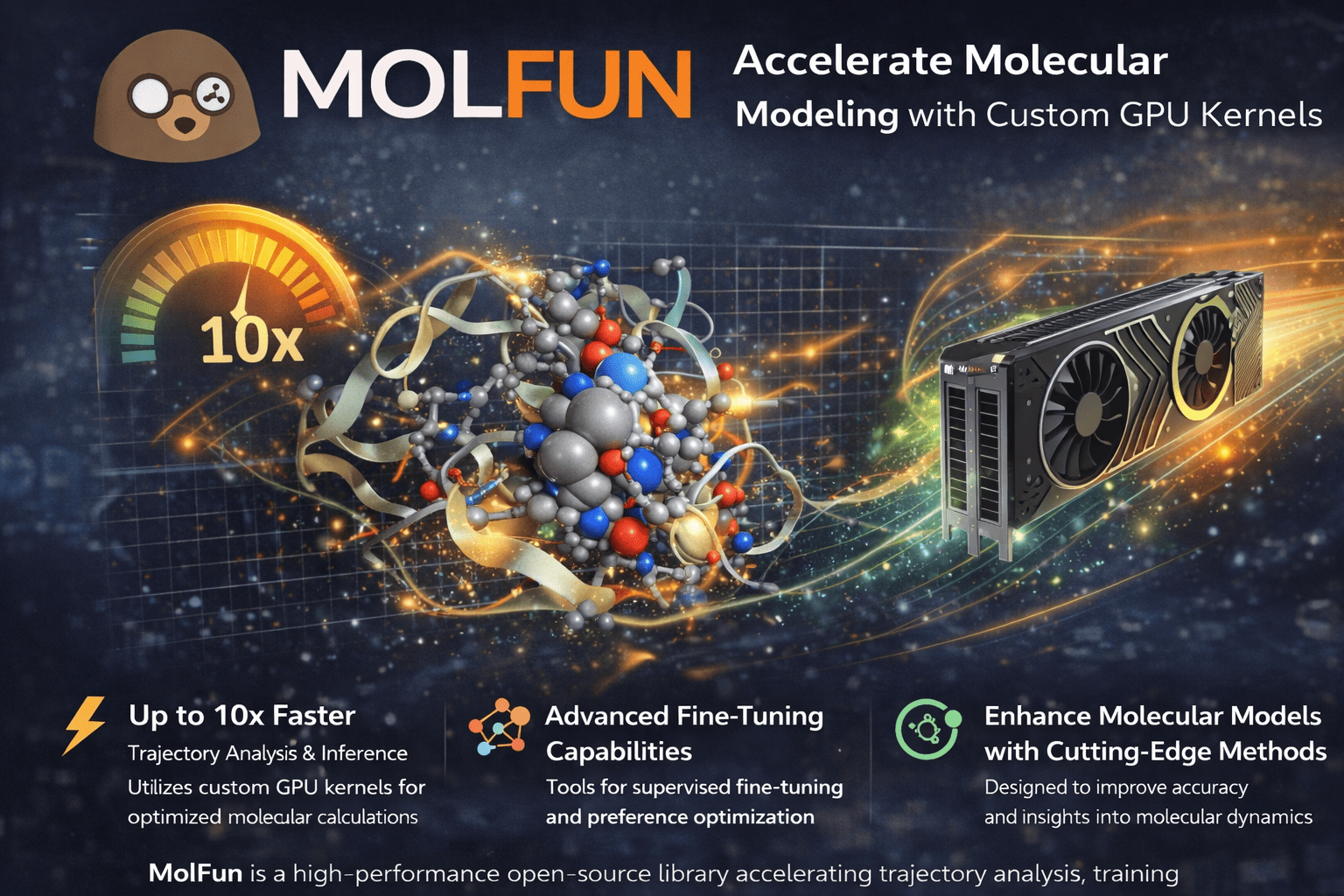

MolFun is an open-source framework for fine-tuning and adapting pre-trained protein structure prediction models, such as OpenFold, to specific downstream tasks. Instead of training from scratch, researchers can reuse what a pre-trained model has already learned and adapt it to their own molecular problems.

It offers four fine-tuning strategies — from lightweight Head Only (~50K params) to Full fine-tuning (~93M params), with LoRA and Partial options in between — each optimized for different dataset sizes and computational budgets.

Beyond fine-tuning, MolFun provides built-in experiment tracking with native integrations for WandB, Comet, MLflow, Langfuse, and HuggingFace, and supports composite tracking, which logs to multiple backends simultaneously.

The framework provides:

- Four fine-tuning strategies (Head Only, LoRA, Partial, Full) for adapting protein structure prediction models to specific downstream tasks.

- Modular architecture with swappable components via a registry system: attention mechanisms, block types, structure modules, and embedders.

- Experiment tracking with native integrations for WandB, Comet, MLflow, Langfuse, and HuggingFace, with composite multi-backend support.

- Complete pipeline: data loading, MSA handling, featurization, and model export to ONNX, TorchScript, and HuggingFace Hub.

MolFun makes protein model adaptation accessible and efficient, enabling researchers to fine-tune state-of-the-art models without building pipelines from scratch.

By combining modular fine-tuning strategies with comprehensive experiment tracking, MolFun bridges the gap between pre-trained models and real-world protein engineering tasks.

A framework where protein model adaptation is modular, trackable, and reproducible.

Features

Comprehensive fine-tuning and acceleration tools for protein structure prediction

Four Fine-Tuning Strategies

Head Only (~50K params), LoRA (~600K params), Partial (~5M params), and Full (~93M params) — each optimized for different dataset sizes, from under 100 samples to over 10K.
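
To give a feel for why the trainable-parameter counts differ so much between strategies, here is a small arithmetic sketch for a single linear layer. It assumes the standard LoRA formulation (a frozen weight of shape `d_out x d_in` plus a trainable low-rank update `B @ A` of rank `r`). The layer size and rank are hypothetical values chosen for illustration; they are not taken from MolFun or OpenFold, and the function names are not part of any MolFun API.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable weights when the whole d_out x d_in matrix is updated (bias ignored)."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable weights for a LoRA update: A is rank x d_in, B is d_out x rank."""
    return rank * (d_in + d_out)


# Hypothetical layer size and rank, chosen only for illustration.
d_in, d_out, rank = 256, 256, 8

full = full_params(d_in, d_out)
lora = lora_params(d_in, d_out, rank)
print(f"full: {full}, lora: {lora}, ratio: {lora / full:.4f}")
# prints: full: 65536, lora: 4096, ratio: 0.0625
```

Summed over every adapted layer, this kind of reduction is what lets a low-rank strategy train orders of magnitude fewer parameters than full fine-tuning.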

Modular Architecture

Registry-based system for swappable components: attention mechanisms (standard, Flash, linear, gated), block types (Evoformer, Pairformer), structure modules (IPA, diffusion), and embedders.
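
A registry of this kind can be sketched in a few lines of plain Python. The example below is a generic illustration of the pattern, not MolFun's actual API: `ATTENTION_REGISTRY`, `register_attention`, `build_attention`, and the two placeholder classes are all hypothetical names.

```python
from typing import Callable, Dict

# Hypothetical registry mapping a string key to a component class.
ATTENTION_REGISTRY: Dict[str, Callable[..., object]] = {}


def register_attention(name: str):
    """Class decorator that records a component under `name`."""
    def decorator(cls):
        if name in ATTENTION_REGISTRY:
            raise ValueError(f"attention '{name}' is already registered")
        ATTENTION_REGISTRY[name] = cls
        return cls
    return decorator


@register_attention("standard")
class StandardAttention:
    """Placeholder component; a real one would be a neural network module."""
    def __init__(self, dim: int):
        self.dim = dim


@register_attention("linear")
class LinearAttention:
    """Second placeholder, swappable with the first via its registry key."""
    def __init__(self, dim: int):
        self.dim = dim


def build_attention(name: str, **kwargs):
    """Look up a registered component by name and instantiate it."""
    try:
        cls = ATTENTION_REGISTRY[name]
    except KeyError:
        known = ", ".join(sorted(ATTENTION_REGISTRY))
        raise KeyError(f"unknown attention '{name}'; registered: {known}") from None
    return cls(**kwargs)


attn = build_attention("linear", dim=64)
print(type(attn).__name__)  # prints: LinearAttention
```

With this pattern, swapping a component means changing one string in a config rather than editing model code.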

Experiment Tracking

Native integrations with WandB, Comet, MLflow, Langfuse, and HuggingFace. Supports composite tracking to multiple backends simultaneously for full reproducibility.
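
Composite tracking can be pictured as a thin wrapper that forwards each call to every configured backend. The sketch below shows the idea with in-memory stand-ins; `Tracker`, `InMemoryTracker`, `CompositeTracker`, and `log_metrics` are hypothetical names used for illustration and do not reflect MolFun's or any backend's real interface.

```python
from typing import Dict, List, Optional, Protocol, Tuple


class Tracker(Protocol):
    """Minimal interface assumed for a tracking backend."""
    def log_metrics(self, metrics: Dict[str, float], step: Optional[int] = None) -> None: ...


class InMemoryTracker:
    """Stand-in backend that stores metrics in a list instead of sending them anywhere."""
    def __init__(self, name: str):
        self.name = name
        self.records: List[Tuple[Optional[int], Dict[str, float]]] = []

    def log_metrics(self, metrics: Dict[str, float], step: Optional[int] = None) -> None:
        self.records.append((step, dict(metrics)))


class CompositeTracker:
    """Fans every log call out to all wrapped trackers."""
    def __init__(self, trackers: List[Tracker]):
        self.trackers = list(trackers)

    def log_metrics(self, metrics: Dict[str, float], step: Optional[int] = None) -> None:
        for tracker in self.trackers:
            tracker.log_metrics(metrics, step=step)


backend_a = InMemoryTracker("backend_a")
backend_b = InMemoryTracker("backend_b")
tracker = CompositeTracker([backend_a, backend_b])

tracker.log_metrics({"loss": 0.42}, step=1)
print(backend_a.records == backend_b.records)  # prints: True
```

Because the composite exposes the same method as a single backend, training code does not need to know how many backends are attached.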

Complete ML Pipeline

End-to-end workflow: data loading, MSA handling, featurization, trajectory analysis, and model export to ONNX, TorchScript, and HuggingFace Hub.

Seamless PyTorch Integration

Built on PyTorch 2.x with native compatibility. Drop-in replacements for standard operations with automatic GPU acceleration and zero-copy tensor handling.